Roughly two years ago, I began an investigation that sought to chart the baddest places on the Internet, the red light districts of the Web, if you will. What I found in the process was that many security experts, companies and private researchers also were gathering this intelligence, but that few were publishing it. Working with several other researchers, I collected and correlated mounds of data, and published what I could verify in The Washington Post. The subsequent unplugging of malware and spammer-friendly ISPs Atrivo and then McColo in late 2008 showed what can happen when the Internet community collectively highlights centers of badness online.

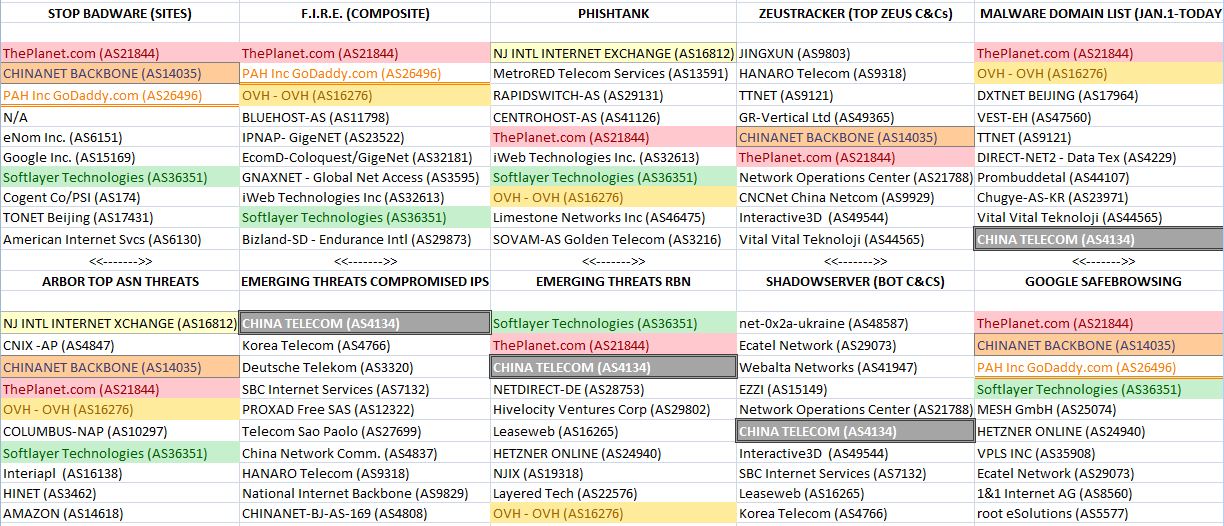

Fast-forward to today, and we can see that there are a large number of organizations publishing data on the Internet’s top trouble spots. I polled some of the most vigilant sources of this information for their recent data, and put together a rough chart indicating the Top Ten most prevalent ISPs from each of their vantage points. [A few notes about the graphic below: The ISPs or hosts that show up more frequently than others on these lists are color-coded to illustrate consistency of findings. The ISPs at the top of each list are the “worst,” meaning they have the greatest number of outstanding abuse issues. “AS” stands for “autonomous system” and is mainly a numerical way of keeping track of ISPs and hosting providers. Click the image to enlarge it.]
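For readers who want to try the same kind of cross-list comparison, here is a minimal sketch of the counting step behind the color-coding: tally how many Top Ten lists each network appears on. The function name, the source labels and the `AS-A`-style network names are all placeholders invented for illustration; none of them come from the actual chart or its data.

```python
from collections import Counter


def cross_list_frequency(top_lists):
    """Count how many source lists each network appears on.

    top_lists maps a source name to its ordered list of networks.
    Returns (network, count) pairs, most frequently listed first.
    """
    counts = Counter()
    for networks in top_lists.values():
        # set() so a network is counted at most once per source list
        counts.update(set(networks))
    # Sort by descending count, then by name so ties come out in a stable order
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical placeholder data, NOT the real lists from the chart
example_lists = {
    "source_1": ["AS-A", "AS-B", "AS-C"],
    "source_2": ["AS-B", "AS-A", "AS-D"],
    "source_3": ["AS-B", "AS-E", "AS-C"],
}

ranking = cross_list_frequency(example_lists)
for network, count in ranking:
    print(f"{network}: listed by {count} of {len(example_lists)} sources")
```

A count like this only says that several watchers flagged the same network. It says nothing about how severe or how long-lived the abuse is.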

What you find when you start digging through these various community watch efforts is not that the networks named are entirely or even mostly bad, but that they do tend to have more than their share of neighborhoods that have been overrun by the online equivalent of street gangs. The trouble is, all of these individual efforts tend to map ISP reputation from just one or a handful of perspectives, each of which may be limited in some way by particular biases, such as the type of threats that they monitor. For example, some measure only phishing attacks, while others concentrate on charting networks that play host to malicious software and botnet controllers. Some only take snapshots of badness, as opposed to measuring badness that persists at a given host for a sizable period of time.

Also, some organizations that measure badness are limited by their relative level of visibility or by simple geography. That is to say, while the Internet is truly a global network, any one watcher’s view of things may be colored by where they are situated in the world geographically, or where they most often encounter threats, as well as their level of visibility beyond their immediate horizon.

In February 2009, I gave the keynote address at a Messaging Anti-Abuse Working Group (MAAWG) conference in San Francisco, where I was invited to talk about research that preceded the Atrivo and McColo takedowns. The biggest point I tried to hammer home in my talk was that there was a clear need for an entity whose organizing principle was to collate and publish near real-time information on the Web’s most hazardous networks. Instead of having 15 or 20 different organizations independently mapping ISP reputation, I said, why not create one entity that does this full-time?

Unfortunately, some of the most clear-cut nests of badness online (the Troyaks of the world and other networks that appear to be designed from the ground up for cyber criminals) are obscured for the most part from surface data collation efforts such as my simplistic attempt above. For a variety of reasons, unearthing and confirming that level of badness requires a far deeper dive. But even at its most basic, an ongoing, public project that cross-correlates ISP reputation data from a multiplicity of vantage points could persuade legitimate ISPs, particularly major carriers here in the United States, to do a better job of cleaning up their networks.

What follows is the first in what I hope will be a series of stories on different, ongoing efforts to measure ISP reputation, and to hold Internet providers and Web hosts more accountable for the badness on their networks.

PLAYING WITH FIRE

I first encountered the Web reputation approach created by the researchers from the University of California Santa Barbara after reading a paper they wrote last year about the results of their having hijacked a network of drive-by download sites that is typically rented out to cyber criminals. Rob Lemos wrote about their work for MIT Technology Review last fall.

Shortly after the Atrivo and McColo disconnections, the UCSB guys started their own Web reputation mapping project called FInding RoguE Networks, or FIRE.

Brett Stone-Gross, a PhD candidate in UCSB’s Department of Computer Science, said he and two fellow researchers there sought to locate ISPs that exhibited a consistently bad reputation.

“The networks you find in the FIRE rankings are those that show persistent and long-lived malicious behavior,” Stone-Gross said.

The data that informs FIRE’s Top 20 comes from several anti-spam feeds, such as Spamcop and Phishtank, and includes data on malware-laden sites from Anubis and Wepawet, open-source tools that let users scan suspicious files and Web sites. Stone-Gross said the score is based on how many botnet command and control centers, phishing sites and malware exploit servers for drive-by downloads are hosted at an ISP, but a server counts only once it has been hosted at that ISP for a certain number of days.

“The threshold is about a week. Basically you get points for each bad server you have, and then it’s scaled according to size,” he said. “We take the inverse of the network size (the approximate number of hosts) and multiply it by the aggregate sum of the network’s malicious activities.”
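To make the arithmetic in that description concrete, here is a rough sketch of a size-scaled score along the lines Stone-Gross describes. It is not FIRE's actual code. The class and function names, the exact seven-day cutoff and the choice of one point per persistent server are assumptions made for illustration, since the article gives only "about a week" and "points for each bad server."

```python
from dataclasses import dataclass

# Assumed cutoff: the article says only that the threshold is "about a week"
PERSISTENCE_THRESHOLD_DAYS = 7


@dataclass
class BadServer:
    kind: str           # e.g. "botnet_c2", "phishing", "exploit" (illustrative labels)
    days_observed: int  # how long the server has been seen at this network


def badness_score(servers, network_size, threshold_days=PERSISTENCE_THRESHOLD_DAYS):
    """Sum the points for persistent bad servers, then scale by 1 / network size."""
    if network_size <= 0:
        raise ValueError("network_size must be a positive host count")
    # Only servers that have persisted past the threshold earn points
    persistent = [s for s in servers if s.days_observed >= threshold_days]
    points = len(persistent)  # assumed weighting: one point per persistent server
    return points * (1.0 / network_size)


# Hypothetical example: three bad servers, one of them too short-lived to count
servers = [
    BadServer("botnet_c2", 30),
    BadServer("phishing", 2),
    BadServer("exploit", 10),
]
small_network = badness_score(servers, network_size=1_000)
large_network = badness_score(servers, network_size=100_000)
print(small_network, large_network)
```

With the same three servers, the 1,000-host network scores 100 times higher than the 100,000-host one. That is the point of scaling by size: a big host is not penalized simply for being big.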

Stone-Gross said about half of the Top 20 are fairly static. “GigeNET, for example, seem to be leaders in hosting IRC botnets, and this has roughly been the case as long as we’ve been keeping track.” GigeNET did not return calls seeking comment.

Even compensating for size, FIRE lists some of the world’s largest ISPs and hosts conspicuously at the top (worst) of its badness index. However, FIRE’s findings are consistent with those that measure badness from other perspectives, and two major US-based networks show up time and again on most of these lists: Houston-based ThePlanet.com, and Plano, Texas-based Softlayer Technologies.

Stone-Gross said a major contributor to the badness problem at many big hosts is the fact that most of their tenants are absentee landlords, some of whom have rented and sub-let their places out to itinerant riff-raff.

“What happens is they’ll have maybe a few hundred or even thousand resellers, and those resellers often sell to other resellers, and so on,” he said. “The upstream providers don’t like to shut them off right away, because that reseller might have one bad client out of 50, and they’re not law enforcement, and they don’t feel it’s their job to enforce these kinds of things.”

Sam Fleitman, chief operating officer at Softlayer, said the company has been trying to become more proactive in dealing with abuse issues on its network. Fleitman said his abuse team has been reaching out to a number of groups that measure Web reputation to see about getting direct feeds of their data.

“Most hosting companies are reactive…finding and getting rid of problems that are reported to them,” Fleitman said. “We want to be proactive, our goals are aligned, and so we’ve been trying to get that information in an automated way so we can take care of these things quicker.”

Indeed, not long after the UCSB team posted their FIRE statistics online, Softlayer approached the group to hear suggestions for reducing their ranking, Stone-Gross said.

“They came to us and said, ‘We’d like to improve that,’ so we had a talk with them and gave them a whole bunch of suggestions,” Stone-Gross said. “That was about three weeks ago, and they’ve since gone from being consistently in the Top 3 worst to usually much lower on the list.”

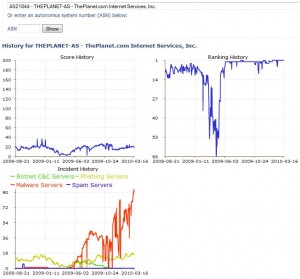

What’s probably most distinctive about FIRE’s approach is that it allows users to view not just the volume of reported abuse issues at a given network, but also to drill down into specific examples and even chart the life of those abuse examples over time.

For instance, if you click this link you will see the reputation history for ThePlanet.com. The graphic in the upper right indicates that, aside from a brief period in the middle of 2009, ThePlanet has been at or near the top of the FIRE list for most of the last 18 months. Stone-Gross said that one gap corresponds to a time last April when the university’s servers crashed and stopped recording data for a few days.

Click on any historic points in the multicolored line graphs in the bottom left and you can view the IP addresses of phishing Web sites, malware and botnet servers on ThePlanet.com over that same time period, as recorded by UCSB.

ThePlanet’s Yvonne Donaldson declined to discuss FIRE numbers, the abuse longevity claims, or the company’s prevalence on eight out of ten of the reputation lists that flagged it as problematic. In a statement e-mailed to Krebs on Security, she said only that the company takes security very seriously, and that it takes action against customers that violate its acceptable use policies.

“When we find issues of a credible threat, we notify the appropriate authorities,” Donaldson wrote. “We may also take action by disabling or removing the site, and also notify customers if a specific site they are hosting is in violation.”
